Most of us who started off operating computers, and later networks, also had to become the first facilities engineers for the rooms that housed our toys. All over the United States in the 1990s, various broom closets, unused offices, and basement storage rooms became data centers for the emerging client-server (and subsequent dot-com) explosion.

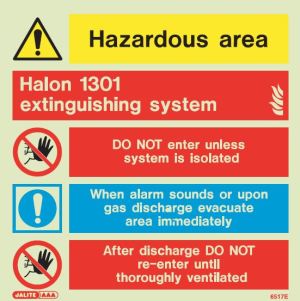

We all had to learn the intricacies of power distribution, cooling, rack spacing and placement, physical security, fire suppression, alarming and alerting, safety, and even how to organize our data centers for showing off to our new clients and prospects.

My first foray into managing such facilities was as a sysadmin (and later operations director) for a dot-com company that produced news and stock market information. We had an office in a warehouse district building in Minneapolis that was over one hundred years old and had at one time served as a parking garage where a Ford plant kept its new trucks.

Needless to say, this building was not engineered to be a data center by any stretch of the imagination. Our office on the third floor became a data center by virtue of necessity, and many changes to the building had to be made to accommodate our thirst for processing power. The building's original electricity came from an ancient and massive 115-volt utility feed (versus the more common 230 volts today). We had to have a 480-volt power system brought into the building, all whilst trying to preserve the historical significance of our former warehouse. The first day crews came in and started boring 16-inch holes in the concrete floor for a new power distribution system, I was thinking, “What have I gotten myself into?”

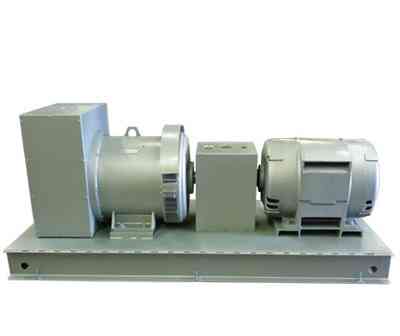

After much discussion, and days of analysis of our power requirements, we decided on an APC Silcon UPS system. This system was (and still is) designed for ultra-high availability, and based upon our calculations it would run the data center for around 90 minutes without utility power. That does not seem like much time, and it isn’t, but those 90 minutes would let us perform an orderly shutdown of the data center and transfer processing to our backup data center, which was all we needed.
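For readers who have not done this kind of sizing, here is a minimal sketch of the arithmetic in Python. It assumes a constant load and a fixed inverter efficiency, which real batteries do not strictly obey, and the function name, parameters, and figures are invented for illustration. They are not the numbers from our installation.

```python
def estimated_runtime_minutes(usable_battery_wh: float,
                              load_watts: float,
                              inverter_efficiency: float = 0.9) -> float:
    """Rough constant-load estimate: energy delivered divided by power drawn.

    All inputs are hypothetical; a real sizing exercise would use the
    vendor's discharge curves rather than this linear approximation.
    """
    if load_watts <= 0:
        raise ValueError("load_watts must be positive")
    hours = usable_battery_wh * inverter_efficiency / load_watts
    return hours * 60


# Made-up example: 60 kWh of usable battery feeding a 36 kW load.
print(estimated_runtime_minutes(60_000, 36_000))
```

With those made-up inputs the estimate comes out to roughly 90 minutes. Doubling the load halves the runtime, which is why the calculation has to be redone every time racks are added.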

After the UPS system was installed, we determined that we needed to test it regularly under actual load, which for us meant during stock market hours. We decided that on the first Friday of the month, in the early afternoon, we would test the UPS by shutting off the incoming power feed to simulate a power failure. The procedure would then be to measure how much runtime the UPS had and to watch for problems such as a bad battery.

As the leader of the team, it was my responsibility to flip the switch. There were three switches: one for the incoming power feed, one for the power feed leaving the UPS to feed the data center, and one in the middle to bypass the UPS and feed the data center directly from utility power. For this test, the left-hand knob was turned with a satisfying “thump”. The UPS would begin alarming that it was on battery power, we would take our measurements, and I would then restore utility power by closing the switch.

Now, this test was not without consequence, and we all knew it. An abrupt shutdown of our data center would bring our business to a halt, and we had service level agreements with clients that required us to pay them if we had excessive unavailability of our services. Because of the seriousness of this test, I would send an email out to the IT department prior to the test. The email went something like this:

“Team, we are about ready to execute the monthly UPS system test. If the test goes as planned, you will not notice anything. If the test does not go as planned, you will likely get an email with my name in the subject line, indicating that I don’t work here anymore.”

The email always got a laugh from the team, and one August day it turned out to be nearly prophetic. As I walked into the data center to begin the test, I failed to look at the small display on the front of the UPS. As it turned out, a couple of $20 fuses connecting the battery cabinet to the UPS controller had failed, and that small two-line LCD would have told me so had I looked at it. But I didn’t.
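In hindsight, the check I skipped amounts to a short pre-flight gate. Here is a minimal sketch in Python, assuming a hypothetical `UpsStatus` record filled in by whoever reads the front-panel display. The field names and alarm text are invented for illustration and are not anything the Silcon itself exposed.

```python
from dataclasses import dataclass


@dataclass
class UpsStatus:
    """Hypothetical snapshot of what the front-panel display reports."""
    on_utility_power: bool
    battery_connected: bool
    active_alarms: tuple = ()


def preflight_problems(status: UpsStatus) -> list:
    """Return the reasons the test must not proceed; an empty list means go."""
    problems = []
    if not status.on_utility_power:
        problems.append("UPS is not on utility power")
    if not status.battery_connected:
        problems.append("battery cabinet is not connected")
    problems.extend(f"active alarm: {alarm}" for alarm in status.active_alarms)
    return problems


# The condition from that August day, as this sketch would have seen it.
status = UpsStatus(on_utility_power=True, battery_connected=False,
                   active_alarms=("battery fuse open",))
for problem in preflight_problems(status):
    print("DO NOT TEST:", problem)
```

The point of returning a list rather than a single yes or no is that the person at the switch sees every reason to stop, not just the first one.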

I walked up to the switch, dutifully turned it, and the deafening sound of a silent data center was instant. Data center down. I stood by the panel for what seemed like an eternity just as the rest of the team was walking in to investigate. We were all horrified.

We began the slow procedure of bringing systems back online, and this had to be conducted in a particular order: Network switches first, then routers, load balancers, domain controllers, databases, application servers, and finally web servers. The process took several hours.
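That ordering is really a dependency chain: each tier needs the one before it. A minimal sketch in Python follows. The tier order comes from the procedure above, while `bring_up` and the `start` and `is_healthy` callables are hypothetical stand-ins for whatever a team actually uses to power on and verify equipment.

```python
from typing import Callable, List, Sequence

# Tier order taken from the recovery procedure; everything else is hypothetical.
STARTUP_ORDER = [
    "network switches",
    "routers",
    "load balancers",
    "domain controllers",
    "databases",
    "application servers",
    "web servers",
]


def bring_up(tiers: Sequence[str],
             start: Callable[[str], None],
             is_healthy: Callable[[str], bool]) -> List[str]:
    """Start each tier in order and stop at the first one that fails its check.

    Returns the tiers that came up successfully, so the caller knows
    exactly where the sequence stalled.
    """
    started = []
    for tier in tiers:
        start(tier)
        if not is_healthy(tier):
            break
        started.append(tier)
    return started


# Example run with stand-in callables that simply report success.
done = bring_up(STARTUP_ORDER, lambda tier: print("starting", tier), lambda tier: True)
print(len(done), "of", len(STARTUP_ORDER), "tiers are up")
```

Stopping at the first unhealthy tier is deliberate: starting web servers on top of a database that has not come back only adds more things to restart later.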

Afterwards, I walked into the office of my boss (his name was Jamie). With a lump in my throat, I explained to him what I did and that I realized that I didn’t observe the control panel on the front of the UPS before flipping the switch. I asked if he was going to fire me. With a smile on his face, he replied, “No, why would I do that? I just invested hundreds of thousands of dollars into your education.” It was a very compassionate moment from a man who had every reason to be upset with me, and I was (and am) very grateful for his kindness during my mistake.

I made my way back to my computer after one of the most terrible and exhausting days. Upon opening my email box, there was a “reply all” to my original test announcement from one of my coworkers. It was one line:

“How’d the test go?”

My response:

“The UPS test is complete. No further testing is required at this time.”

There is no sound louder than a quiet data center.

Once successfully compiled and tested, the binary would be transferred to one of the disk packs where compiled business programs resided, and the source code would be checked in to the program library.

The university had perhaps a dozen IBM 029 card punch machines that could be used by students. It resembled a typewriter that was built into a desk, with card reading and punching apparatus up on top. It took quite a bit of practice to really understand the 029 and be productive on it.

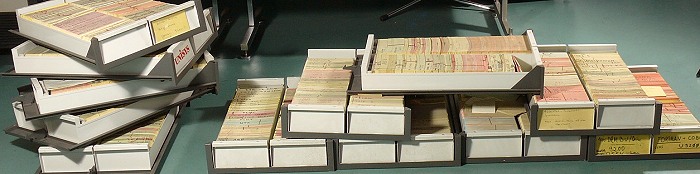

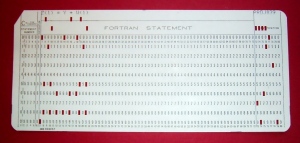

Punch cards have 80 columns. Each column is a character. The punch card in the illustration here is a single line from a FORTRAN program. If you had a big FORTRAN program, then you had a big deck of cards. If you had a program with hundreds or thousands of lines of code, then chances are you had one of those metal trays used to securely hold a card deck.

But Ralph had an entirely different answer for Rose. He told her, “If the fire alarm sounds, you need to pull the backup tape list, grab all of those tapes and take them with you as you leave the room. But if backups have not been done yet, you need to run them and then take all of the tapes with you.”

Just then, the division chief came in and asked what had happened. We all turned to Mike, who fessed up. None of us knew how to reset the emergency off button, but Ted, the division chief, was familiar with it and showed us how. He reset the switch, and the data center roared to life. We booted the PDP-11’s and all was right with the world.
